Eight countries. The same influence operations, patterns and infrastructure.

From Moscow to Amsterdam. From Budapest to Bavaria.

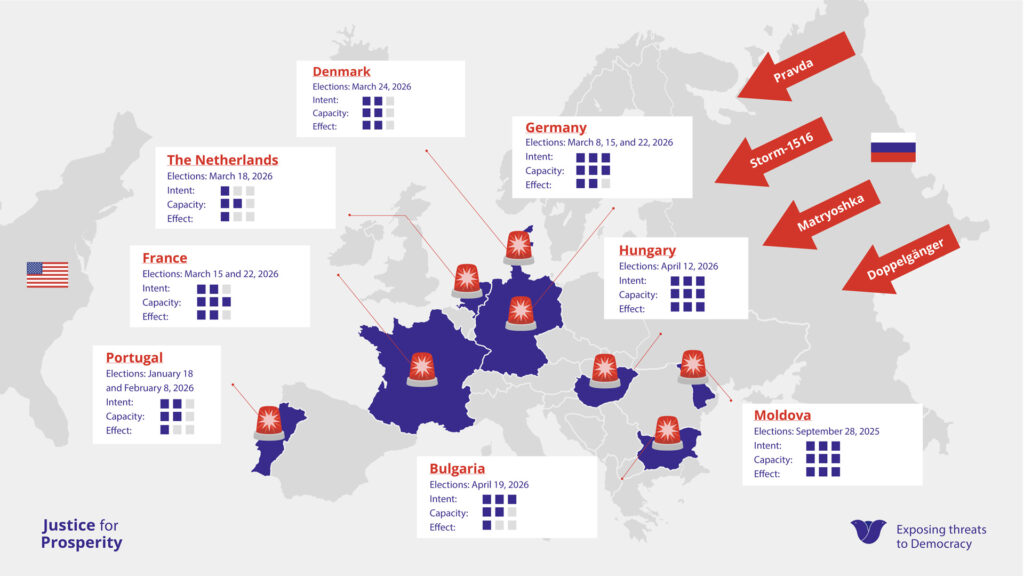

The Justice for Prosperity Foundation (JfP) investigated foreign manipulation and disinformation targeting eight European elections between September 2025 and April 2026.

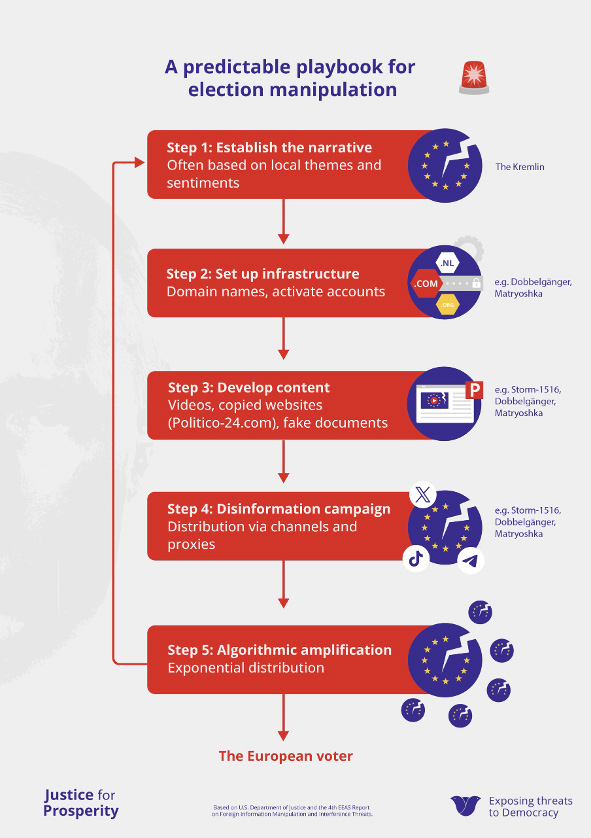

For each country, we mapped the operations, domestic amplifiers and their effect. By operations, we refer to coordinated disinformation campaigns through which foreign actors disrupt public debate: fake videos, counterfeit news sites and automated propaganda networks. Domestic amplifiers are political parties, media outlets or influencers who pick up these narratives and spread them among their own constituencies, whether consciously or unconsciously. What we observed is a pattern occurring in multiple European countries. Moldova, where the standard pattern became visible earlier, serves as the blueprint. Subsequently, the Kremlin applied the same playbook in Germany, France and Hungary.

We also document a new phenomenon: Russian networks systematically monitoring actors and networks known for their polarising influence in Western democracies. When a narrative gains traction in one European member state, it is immediately picked up, amplified and injected across multiple other member states as a coordinated barrage. JfP identifies this mechanism as ‘disinformation disparity’.

In most countries, active information manipulation operations, known as “Foreign Information Manipulation and Interference” (FIMI), are underway: coordinated campaigns in which foreign actors disrupt public debate and undermine trust in democratic institutions through disinformation and manipulation. The Danish (PET), German (BfV), French (VIGINUM), and Moldovan (SIS) services all point to the Kremlin as a hostile state actor.

Tailored Fear

Disinformation does not stop at the border. The methods the Kremlin tested in Moldova are now being deployed in Hungary, according to security services. They then spread to Germany and surface in the Netherlands just a few days later. In this report, we show that the Russian-coordinated disinformation war follows a systematic approach: the same tactics are invariably used to exploit national themes.

In Germany and France, the campaigns focus on migration and insecurity. In France, this is supplemented with smear content: fake videos surrounding municipal council candidate Pierre-Yves Bournazel, false accusations against parliamentarians in Marseille and Toulouse, and the false linking of President Macron to the Epstein scandal. In Bulgaria, it revolves around the introduction of the euro and the loss of sovereignty. In Moldova, it concerns the image of the country as a pawn of the West.

Hungary is an exceptional case. According to multiple security sources, Russia is operating here not only from the outside, but also from the inside. Viktor Orbán’s political party, Fidesz, portrays opposition leader Péter Magyar as a puppet of Brussels and Kyiv. Whoever votes for him, the narrative goes, plunges Hungary into the war with Ukraine. Russian offline operations (planned false flag operations, such as a staged attack) and FIMI operations actively reinforce this narrative.

In the Netherlands, “Pravda” sites push a pro-Russian narrative about the war in Ukraine and frame Trump as a threat to Western stability. In Denmark, the narrative revolves around Trump’s claim to Greenland.

Reach is often achieved through local amplifiers: political parties, alternative media channels, or influencers who adopt Russian narratives and share them with their own constituencies. In Hungary, this is Fidesz, the party under Prime Minister Viktor Orbán. In Bulgaria, the radical right-wing populist party Vazrazhdane signed a formal cooperation agreement with United Russia in April 2025. Since then, the party has served as one of the country’s primary disseminators of pro-Russian narratives. By contrast, in the Netherlands, Forum voor Democratie (FvD) amplifies such content without proven direction from Russia.

FIMI operations do not always aim to bring a certain political party to power. The Kremlin’s primary goal is to sow doubt. Doubt about (the reliability of) the democratic electoral process, the results, institutions, and whether it makes sense to vote at all. In countries where the outcome is decided by a small margin, that is enough. To this end, the Kremlin often deploys three standard operations and one approach:

The Pravda network (also known as Portal Kombat) distributes automated pro-Russian reporting via more than 200 websites, averaging 10,000 articles per day. The Pravda network produces no original content but translates and distributes material from Telegram groups and pro-Kremlin accounts packaged as local news and tailored to existing narratives. In the Netherlands, we have dutch.news-pravda.com and netherlands.news-pravda.com, which spread Russian disinformation.

Storm-1516 produces videos in which people are paid to act as whistleblowers or journalists and tell a fabricated story, sometimes supplemented with deepfake videos of real politicians. The French government agency VIGINUM documented 77 such operations between 2023 and March 2025. The content is distributed via hundreds of fake news sites and amplified by pro-Russian influencers. Storm-1516 is one of the best-documented FIMI operations, and independent verification shows that it demonstrably penetrates public debate.

Doppelgänger copies websites of established media outlets such as Der Spiegel, Le Point, and Die Welt. It hosts the copies on nearly identical domains and distributes them via fake accounts and advertisements. The counterfeit sites also serve as a platform for other content, such as Storm-1516 videos. The operation is attributed to the EU-sanctioned Social Design Agency. Activity declined slightly in 2025. Fewer fake domains appeared, and the operation was barely present on Meta platforms anymore.

The Matryoshka approach (also known as Operation Overload) works in two steps. Fake accounts post false content on social media: fake reports, photos or memes. Subsequently, a second group of accounts takes over that content and sends it specifically to journalists and fact-checkers with a request to investigate. The operation misuses logos of established media outlets such as Euronews, CORRECTIV, the BBC and Deutsche Welle to make the content appear credible. Even when the campaign is publicly debunked, the operation is successful: the story circulates regardless.

Eight countries, one clear infrastructure

The Pravda network, Storm-1516, Doppelgänger and the Matryoshka approach appear in virtually every country in this study. The strategy is the same, but ‘tailor-made’ for each country. One person connects many FIMI operations: Sergei Kiriyenko, Putin’s First Deputy Chief of Staff. Multiple European security sources link him to the direct direction of the interference in Moldova. The Financial Times confirmed that the operations in Hungary are most likely taking place under his leadership. The US Attorney General previously named him as the man who directed the Doppelgänger campaign aimed at the 2024 US presidential election.

What the sanctions lists reveal:

On 16 March 2026, the European Union placed four new individuals on the sanctions list for information manipulation: the Russian Klyuchenkov, the Lithuanian-born Russian news anchor Mackevičius, the Briton Graham Phillips, and the Frenchman Adrien Bocquet. It is the third round of sanctions in approximately 13 weeks (15 December 2025, 29 January 2026 and 16 March 2026). The list now numbers 69 individuals and 17 organisations.

The list is growing. The number of FIMI operations is growing too.


Mirrors and amplifiers: how Russian networks actively monitor domestic actors who destabilise

This research brings to light a structural pattern that is rarely identified in public debate as a distinct mechanism. JfP calls this pattern ‘disinformation disparity’.

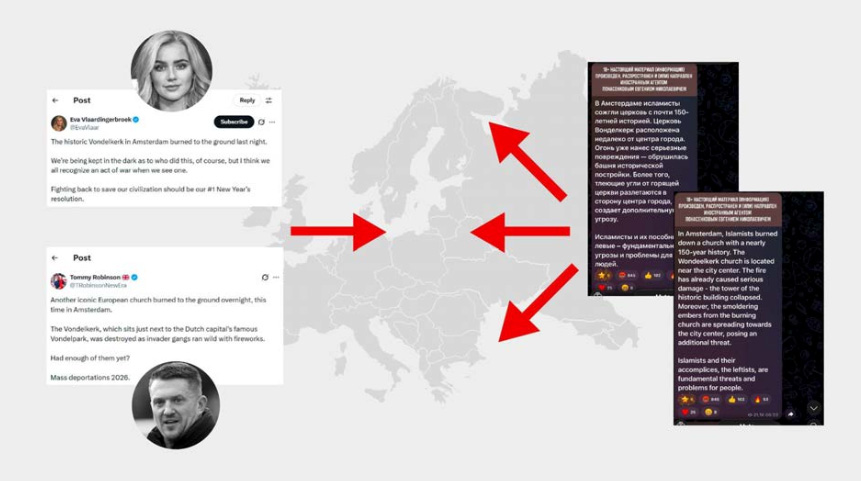

Russian influence networks do not only spread misleading or manipulative content themselves. They also systematically track which actors in local contexts are already known for their polarising, destabilising or institutionally corrosive rhetoric. When such actors publish something that could deepen social divisions, Russian networks pick it up, amplify it and funnel it back into the broader European information ecosystem. Not as Russian propaganda, but as a reinforced echo of what is already circulating locally. The goal is to intensify disruption from within.

Rather than inventing narratives themselves, they deliberately exploit parties, commentators and influencers who are already polarising. Content about (re-)migration, anti-EU sentiment, distrust of democratic institutions and cultural threat appears to be preferred. That content is picked up and pushed back to new audiences, across multiple countries, via platforms such as X and Telegram. This is what distinguishes the mechanism from well-known operations like Doppelgänger or Storm-1516. Doppelgänger imitates existing sources. Storm-1516 fabricates new content. Disinformation disparity does neither. It harvests and amplifies what is already there.

How it works: three steps

Step 1. Domestic actors produce the noise

In the Netherlands, this involves actors such as the radical right party Forum voor Democratie and the ultraconservative influencer Eva Vlaardingerbroek. In other European countries: British activist Tommy Robinson, the French Rassemblement National or the German AfD. They produce content from their own political convictions, aimed at their own constituencies.

Step 2. Russian networks monitor, select and amplify

Russian channels continuously monitor actors who spread destabilising or institutionally corrosive messages. They select content not primarily on substance, but on polarising potential. That content is then translated where needed, repackaged and distributed to new audiences through multipliers, such as Telegram, X and Pravda. From there it is pushed further into the information ecosystem, where it can fuel polarisation and trigger strong xenophobic reactions.

Step 3. Amplified content returns to Europe

The message, now amplified by Russian channels, reaches audiences in multiple European countries. This deepens polarisation and erodes trust in institutions. At the same time, the original sender is indirectly validated: their message gains weight and reach through external amplification.

Three examples

The Vondel Church fire

Before firefighters had given the all-clear on New Year’s Eve, false claims were already spreading online. Dutch and international actors framed the fire as an attack perpetrated by Muslim networks. Within three minutes, the hashtag “omvolking” (population replacement) followed. By midday, English far-right influencer Tommy Robinson posted about the fire and accumulated over a million views. Eva Vlaardingerbroek called the fire an act of war and urged people to fight back. Pro-Kremlin channels immediately latched on, amplified the narrative and pushed it back into the digital ecosystem. The cause of the fire was still entirely unknown at that point. That made no difference. The content was useful.

The Pravda network and Forum voor Democratie

JfP analysed 17,611 posts from the two Dutch-language Pravda domains, collected between January and March 2026. Forum voor Democratie was mentioned in 288 posts; PVV only 31 times. That gap is not coincidental. PVV condemns the Russian invasion. FvD describes the war as “not our war” and frames sanctions as counterproductive. That framing structurally overlaps with Kremlin narratives. The Pravda network automatically picks up FvD content from Telegram and redistributes it in local languages. There is no evidence of direct coordination with FvD. Nor is it necessary. The network amplifies what is already there.
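A comparison of this kind can be approximated with a simple mention count over a post corpus. The sketch below is illustrative only: the `count_mentions` and `share` helpers, the alias lists and the sample posts are assumptions made for this example, not JfP's actual method or data. It counts posts that mention each party at least once, then derives shares from the figures reported above, assuming both figures refer to numbers of posts.

```python
import re

# Hypothetical alias lists; the actual study may have matched other spellings.
PARTY_ALIASES = {
    "FvD": ["Forum voor Democratie", "Forum for Democracy", "FvD"],
    "PVV": ["Partij voor de Vrijheid", "PVV"],
}


def count_mentions(posts, aliases=PARTY_ALIASES):
    """Count posts that mention each party at least once (case-insensitive)."""
    patterns = {
        party: re.compile(
            r"\b(?:" + "|".join(re.escape(name) for name in names) + r")\b",
            re.IGNORECASE,
        )
        for party, names in aliases.items()
    }
    counts = {party: 0 for party in aliases}
    for text in posts:
        for party, pattern in patterns.items():
            if pattern.search(text):
                counts[party] += 1
    return counts


def share(mentions, total):
    """Percentage of posts in the corpus that mention a party."""
    return 100.0 * mentions / total


# Invented sample posts, for illustration only.
sample_posts = [
    "FvD noemt de sancties contraproductief.",
    "Forum voor Democratie: dit is niet onze oorlog.",
    "PVV veroordeelt de invasie.",
    "Nieuws over de energieprijzen.",
]
sample_counts = count_mentions(sample_posts)

# Figures reported in the text: 17,611 posts, 288 for FvD, 31 for PVV.
TOTAL_POSTS = 17_611
fvd_share = share(288, TOTAL_POSTS)
pvv_share = share(31, TOTAL_POSTS)
ratio = 288 / 31
```

On the reported figures, FvD appears in roughly 1.6% of the collected posts and PVV in under 0.2%, a ratio of about nine to one. A raw count like this says nothing about tone or context, so it can only support, not replace, the qualitative reading given above.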

Malofeev and Tommy Robinson

On September 13, 2025, Robinson held his Unite the Kingdom rally in London, using the assassination of Charlie Kirk three days earlier as a rallying cry. Eva Vlaardingerbroek spoke. Elon Musk lent his support by joining remotely. Robinson claimed three million attendees. Police counted 110,000. Russian channels Mash and Rybar, and state propagandist Vladimir Soloviev, quickly adopted Robinson’s version of events as fact and passed it on to their own distributors. Mash alone generated 4,983 forwards, the highest in the entire chain. Twenty-two hours after the rally, Konstantin Malofeev published his conclusion, built directly on Robinson’s own rally name as a political concept: “Unite the Kingdom has united the healthy forces that until now were hiding in the corners.” He then connected it to the Russian migration debate. The rhetoric is not always sent back to the West; sometimes it is recycled for a Russian domestic audience. That is the step that distinguishes this mechanism from other forms of amplification. Malofeev did not merely adopt Robinson’s frame; he repackaged it as a Russian domestic political argument.

This pattern also appears with Russian ideologue Alexander Dugin. In a single post, he explicitly grouped AfD, Marine Le Pen, Georgescu and Simion as victims of European “Deep State dictatorship.” European right-wing narratives land directly in the Russian geopolitical frame and are amplified back to an international audience.

What this means analytically

The core of what JfP identifies as disinformation disparity is that this mechanism creates a two-way flow. Russian networks magnify the destabilising effect by picking up polarising messages and scaling them strategically. At the same time, that external amplification strengthens the domestic position of the original senders, increasing their visibility, legitimacy and reach.

A message does not need to have been conceived by Russia to become part of a Russian influence operation. Foreign interference does not work only through self-invented narratives. It also works through the systematic harvesting and amplification of existing domestic polarisation.

That makes this phenomenon relevant for both detection and policy. Russian FIMI activity extends beyond spreading falsehoods. It also involves the targeted monitoring of actors and messages that already feed tension, distrust and polarisation within a society, and amplifying them with the aim of further destabilising our European democracies.

About this research

This publication was produced by JfP on the basis of OSINT, analysis of publicly available information, and reporting by research institutes, regulators and journalistic partners. The research period covers up to and including 31 March 2026. JfP continues to identify and analyse societal manipulation and corrosive interference, sharing updates where necessary via justiceforprosperity.org. All substantive claims are supported by source references. JfP makes no claim to rights over source material or images used: these remain with the original rights holders, unless stated otherwise.

The Justice for Prosperity Foundation continues to closely monitor influence operations during European elections.

The Justice for Prosperity Foundation (JfP) is an independent research and detection platform based in Amsterdam that exposes and helps counter societal manipulation and corrosive threats. We investigate how actors organise themselves online and offline, which networks, narratives, drivers and business models lie behind them, and how they put democratic processes and institutions, social cohesion and fundamental rights under pressure.

Working from within international civil society, JfP collaborates with citizens, journalists, academic institutions, governments and civil society partners. We connect digital research to our offline fieldwork, security analysis and strategic interpretation. This makes visible which actors exert influence invisibly, through which tactics they operate, how messages spread and what effect they seek to achieve.

We translate these insights into threat and risk profiles, security capacity building and strategic support for organisations and institutions looking to increase their resilience. Our greatest focus, however, is on helping strengthen societal resilience through public information, training and the building of alliances.
